(字句解析ツール)

http://www.sw.it.aoyama.ac.jp/2019/Compiler/lecture5.html

© 2005-19 Martin J. Dürst 青山学院大学

flexflex exercisesBring your notebook PC (with flex, bison,

gcc, make, diff, and m4

installed and usable)

Deadline (changed!): May 23, 2018 (Thursday), 19:00

(If you already submitted problems 1/2, just submit problem 3 on a separate

sheet of paper.)

Where to submit: Box in front of room O-529 (building O, 5th floor)

Format: A4 single page (using both sides is okay; NO cover page), easily readable handwriting (NO printouts), name (kanji and kana) and student number at the top right

| 0 | 1 | |

| →T | G | H |

| *G | K | L |

| *H | M | K |

| *K | K | K |

| *L | M | K |

| M | L | - |

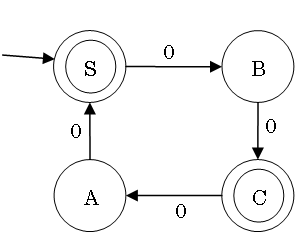

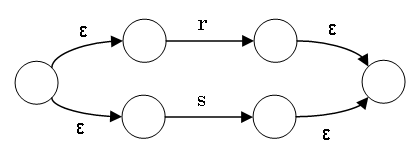

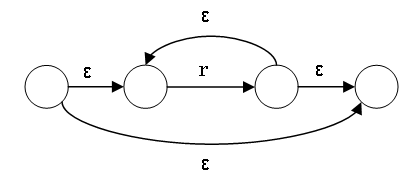

ab|c*dThese all have the same power, describe/recognize regular languages, and can be converted into each other.

getNextToken())Choices:

flex)flexlex, with various extensionslex: Lexical analyzer generator available with Unix

(creator: Mike Lesk)bison(reminder)

ls: list the files in a directorymkdir: create a new directorycd: change (working) directorypwd: print (current) working directorygcc: compile a C program./a: execute a compiled program(reminder)

C:\cygwin C:\cygwin can be reachedC:\cygwin\home\user1/home/user1 with

pwdC:\cygwin:cd /cygdrive/c (change directory to the C drive of MS

Windows)flex Usage Stepsflex (a (f)lex file), with the

extension .l (example: test.l)flex to convert test.l to a C program:flex test.llex.yy.c)lex.yy.c with a C compiler (maybe together with

other files)flexCall the yylex() function once from the main

function

Repeatedly call yylex() from the parser, and return a token

with return

In today's exercises and homework, we will use 1.

Later in this course, we will use 2. together with bison.

flex Input Formatint num_lines = 0, num_chars = 0; %% \n ++num_lines; ++num_chars; . ++num_chars;

%% int main(void) { yylex(); printf( "# of lines = %d, # of chars = %d\n", num_lines, num_chars ); } int yywrap () { return 1; }

flex Exercise 1Process and execute the flex program on the previous slide

test.l and copy the contents of the previous

slide to the filelex.yy.c withflex test.la.exe withgcc lex.yy.c./a <fileflex Input Formatdeclarations,... (C program language)

declarations,... (C program language)

%%

regexp statement (C program language)

regexp statement (C program language)

%%

functions,... (C program language)

functions,... (C program language)

flex Input FormatMixture of flex-specific instructions and C program

fragments

Three main parts, separated by two %%:

#includes, #definesNewlines and indent can be significant!

flexCaution: A manual is not a novel.

flex (lex.yy.c)flex for different inputsflex (flex also uses

lexical analysis, which is written using flex)flex

-v)flex Works.l file).l fileflex Works.l

fileflex Exercise 2The table below shows how to escape various characters in XML

Create a program in flex (for this conversion, and) for the

reverse conversion

| Raw text | XML escapes |

|---|---|

' |

' |

" |

" |

& |

& |

< |

< |

> |

> |

flex Exercise 3: Detect NumbersCreate a program with flex to output the input without changes,

except that numbers are enclosed with >>> and

<<<

Example input:

abc123def345gh

Example output:

abc>>>123<<<def>>>345<<<gh

Hint: The string recognized by a regular expression is available with the

variable yytext

flex Exercise 4 (Homework):Deadline: May 30, 2019 (Thursday), 19:00

Where to submit: Box in front of room O-529, available starting May 24

(start early, so that you can ask questions on May 24)

Format:

flex input file

(.l file)Collaboration: The same rules as for Computer Practice I (計算機実習 I) apply

flex Exercise 4 (Homework):XML (see W3C XML

Recommendation) is a generalization of HTML for document and data formats.

XML is stricter than HTML (e.g. attribute values are always quoted,...). Using

flex, create a program that takes an arbitrary XML file as input

and outputs its syntactic components, one component per line.

Simple example input: <letter>Hello & Happy

World!</letter>

Simple example output:

Start tag: <letter> Contents: Hello Entity: & Contents: Happy World! End tag: </letter>

Details:

flex.l file, flex may

still run without errors

Solution: Always start with flex:

> flex file.l && gcc lex.yy.c && ./a

<input.txt

.l file (before the first

%%), C program fragments have to be indented by at least one

space int yywrap () { return 1; }yytextputchar(yytext[0]); will output the first character

of the matched text\ or quoted within ""There will be a minitest (30 minutes) next week (Friday, May 24). Please prepare well!